HUMAN COMMAND ARCHITECTURE

Structural Verification of Operational Oversight in AI-Regulated Environments.

ANTROPOLOGIC assesses whether human oversight remains operationally viable under high automation and regulatory pressure.

Compliance can exist on paper. Command must exist in reality.

The Structural Risk

European AI regulation requires effective human oversight of high-risk AI systems.

In many high-automation environments, oversight remains formally present while the effective exercise of judgment weakens.

Intervention is possible. Responsibility is assigned. But decision authority becomes progressively constrained.

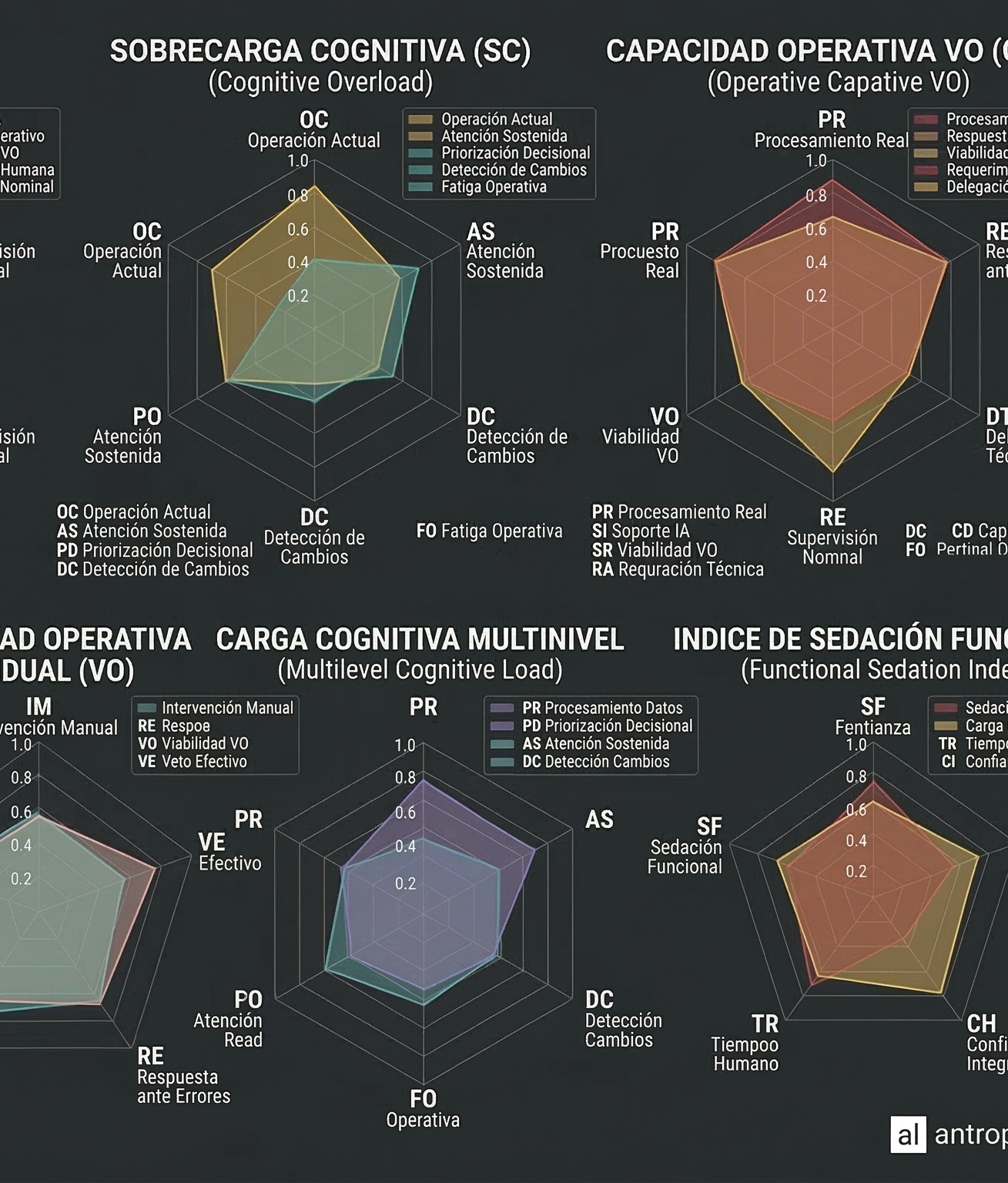

ANTROPOLOGIC defines this condition as Functional Sedation™: a structural reduction of active human judgment in highly mediated systems.

Organizational Consequences

This gap between formal oversight and the effective exercise of judgment produces recognizable effects in people and organizations:

- Strategic decisions that are delayed or blocked without a clear technical cause.

- Growing dependency on systems, procedures, or algorithms without an increase in real clarity.

- Increased personal and organizational stress without proportional operational improvement.

- Deterioration of commitment and effective involvement.

- Repetition of errors that fail to generate learning.

- Difficulty in assuming responsibility within highly mediated structures.

- Increasing exposure under regulatory frameworks that demand effective human oversight.

The problem is not technical, but structural and human. THE RISK HAS ALREADY BEEN IDENTIFIED:

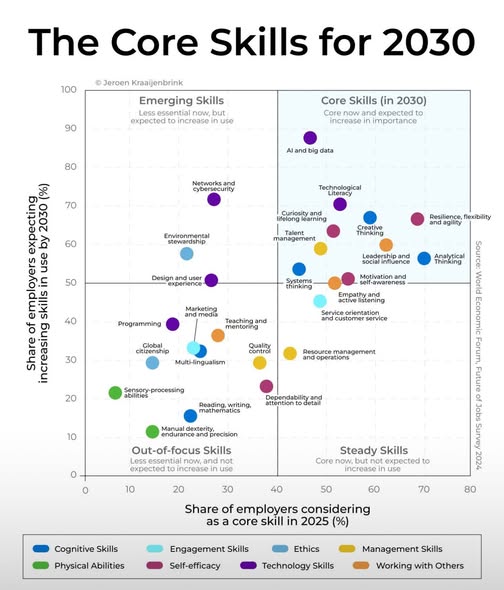

Critical Skills for 2030 and Their Structural Fragility

The world’s leading international organizations identify the following as critical skills for 2030:

- Analytical and Critical Thinking

- Resilience and Flexibility

- Ethics and Systems Thinking

- Curiosity and Continuous Learning

These are not "soft skills."

They are factors of organizational survival in highly automated environments.

However, in contexts heavily mediated by technology and algorithms, these capabilities do not simply disappear:

They weaken structurally without the organization even noticing.

The problem is not a lack of talent.

It is the progressive loss of the effective exercise of judgment.

What ANTROPOLOGIC Does

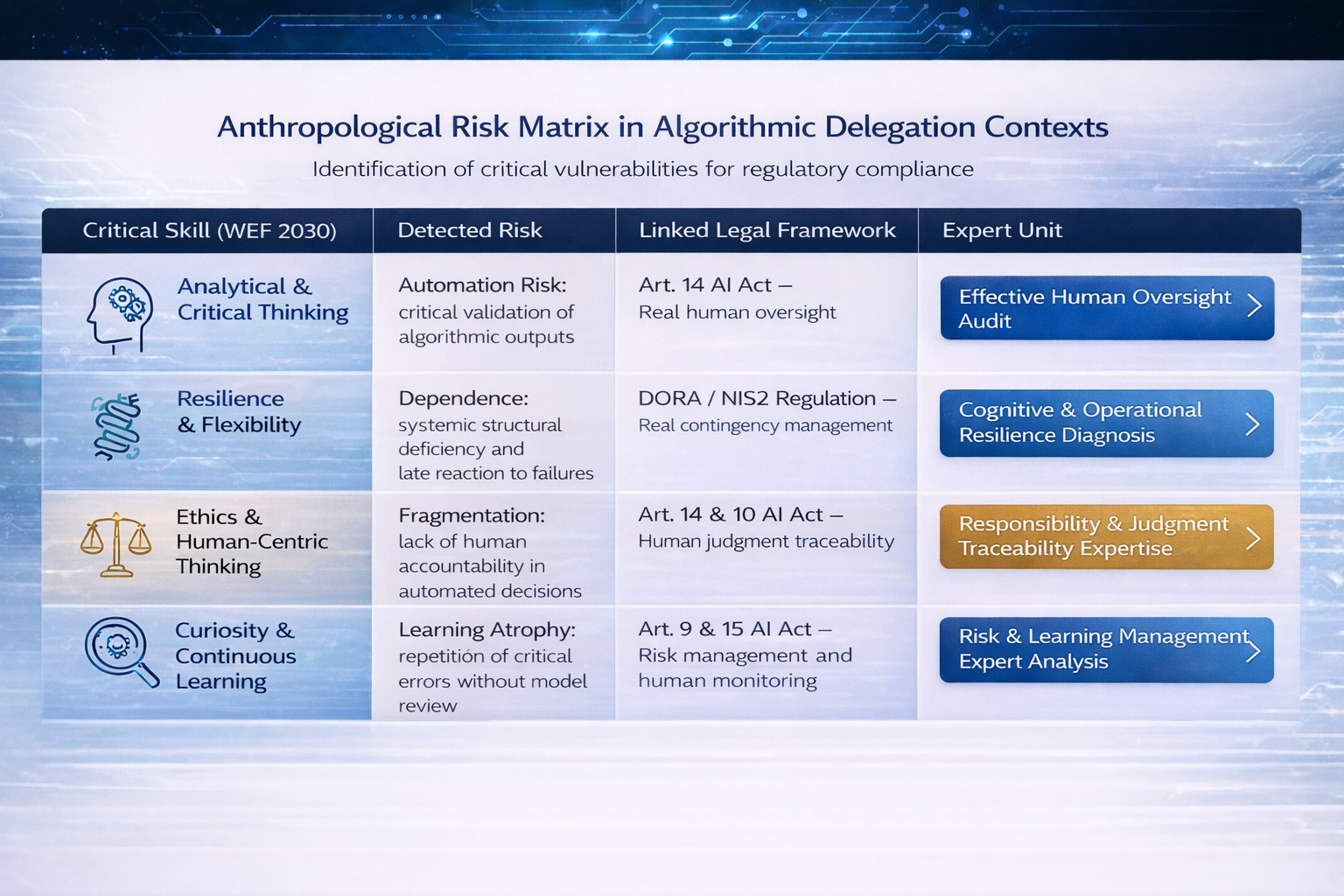

ANTROPOLOGIC translates critical strategic skills—such as analytical thinking, resilience, ethics, and learning—into evaluable variables of operational and regulatory risk.

Today, the weakening of human judgment is not just a cultural issue.

It is a direct legal risk under frameworks such as:

– Regulation (EU) 2024/1689 (AI Act)

– Regulation (EU) 2022/2554 (DORA, Digital Operational Resilience Act)

– European Liability Directives

Oversight that fails to exercise independent judgment is no longer command: the decision-maker disappears as a subject and becomes just another gear in the machine, assuming legal responsibility for a reality they no longer understand or govern.

ANTROPOLOGIC performs forensic assessments of the real exercise of judgment in environments highly mediated by technology.

We do not audit the code. We audit the capacity for command. We evaluate:

– Whether human oversight is effective or nominal.

– Whether a real capacity for veto exists or only formal validation.

– Whether responsibility is traceable or diluted.

– Whether the organization can operate manually in the event of technical failure.

The result is a structural diagnosis of the state of judgment and organizational resilience.

Our goal is not to improve processes.

It is to determine whether command remains human or has been de facto transferred to systems, procedures, or automatisms.

When is an Anthropological Diagnosis Necessary?

Highly automated environments introduce a structural pressure toward Functional Sedation.

Its intensity depends on the interaction between system architecture, organizational culture, and human operators. Analyzing this interaction makes it possible to assess and compare the viability of human command across different operational designs.

Human Command Audit

Erosion of Operational Command: Functional Sedation is a structural tendency; Human Command Viability is the diagnostic measure of its intensity.

Diagnosis: We assess whether operational authority remains executable in real conditions, or whether autonomy has been structurally reduced.

Defensive Governance: Operational decisions may be technically justified yet repeatedly delayed or excessively validated.

Under regulatory and procedural pressure, judgment becomes defensive rather than directive.

Diagnosis: We evaluate whether authority can act decisively under scrutiny, or whether governance structures inhibit operational command.

Displacement of Judgment: When systems, models, and procedures dominate operational environments, interpretive authority may shift away from the human operator.

Diagnosis: We determine whether judgment remains active and embodied, or whether it has been structurally delegated to automated processes.

Functional Sedation and Organizational Hollowing

Some organizations operate with technical efficiency while suffering from an invisible hemorrhage of commitment and purpose. The system functions by inertia, while people become disconnected from the real effects of their actions.

Erosion of Identity: Signs of unrest and turnover of key personnel that cannot be traced to clear operational causes.

Diagnosis: The problem is not motivational; it is structural. We detect the underlying dynamics that generate burnout and the loss of real accountability.

Anesthesia of Responsibility: The structure remains operational, but real involvement is diluted within processes and implicit consensus.

Human Oversight Risk Diagnostic (AI Act)

The adoption of Artificial Intelligence expands operational capacity but can fragment responsibility and create a critical dependency on algorithmic systems.

Procedural Opacity: When technological mediation increases faster than human understanding, decision-makers may gradually become passive validators of system outputs, losing the practical capacity to detect, question, or intervene in errors.

Diagnosis: We assess the structural impact of automation on decision environments to verify whether the human conditions necessary for effective and safe technical intervention remain intact.

Effective Oversight Deficit: This condition may create regulatory exposure under Article 14 of the EU AI Act, which requires that the persons assigned to human oversight of a high-risk AI system retain the real, operational capacity to disregard, override, or reverse its output.